Your AI Needs Better Citations

We recently asked researchers, information professionals, and academics a simple question: Do you trust LLM answers without citations? 88% said no. When we asked what matters most when using generative AI, accuracy and trustworthiness topped the list, followed closely by citation quality and source verification.

Those numbers tell you something important. We're past the experimental phase. These tools have crossed into mainstream professional use. The adoption has already happened. But the trust hasn't caught up, and that gap reveals exactly what's missing. In research environments, provenance matters. Context matters. The ability to verify claims, trace reasoning back to sources, and distinguish between established findings and speculative claims aren't nice-to-have features. They're requirements.

The thing is, we've solved versions of this problem before.

PageRank Wasn't About Pages, It Was About Trust

When Google launched in 1998, they asked a simple question: How do scholars figure out which papers matter? Citations. If researchers keep referencing your work, you're probably onto something. PageRank applied this insight to web pages. A link became a vote of confidence , weighted by authority. The algorithm didn't just count connections but weighted them based on authority.

Fast forward to 2026, and we're watching LLMs consume vast amounts of text without this kind of quality filter. They're pattern-matching machines, incredible at prediction but terrible at verification. When you ask ChatGPT about trisomy increasing chromosome mis-segregation rates in cancer cells, it might give you a fluent answer. But where's that answer coming from? Which papers support it? Which challenge it?

The Reproducibility Crisis Meets The AI Revolution

The problem gets messier when you consider reproducibility. Back in 2012, researchers tried to replicate 53 landmark cancer studies. They succeeded with only six. That's an 89% failure rate for supposedly groundbreaking research. Similar studies at Bayer showed only 36% of key findings could be validated.

This reproducibility crisis revealed something crucial: citation counts alone don't tell you much about research quality. Now imagine feeding all that literature into an LLM. The model sees the text, learns the patterns, but it can't distinguish between a study that's been independently verified and one that's been quietly disputed by subsequent research. It'll weave both into confident-sounding responses.

Scite's Answer: Make Every Citation Tell Its Story

Scite started working on this problem years before ChatGPT made everyone panic about AI trustworthiness. Their approach: extract and classify citation statements at scale. When paper B cites paper A, what's the context?

With over 1.3 billion citation statements indexed, it's not just metadata about which papers cite which. It's the actual sentences where citations appear, classified by how they're being used. When a researcher says "contrary to previous claims by Smith et al.," that's valuable information. When another writes "as conclusively demonstrated by Jones and colleagues," that tells a different story.

The technical pipeline here matters. Scite has agreements with about 40 publishers to access full-text articles, paywalled and open access. They use machine learning models (11 for extraction, one for classification) to process this content systematically. The result is what they call Smart Citations: citation context that shows that an article was cited, as well as how and why.

Connecting The Dots: MCPs & The Future Of Research Discovery

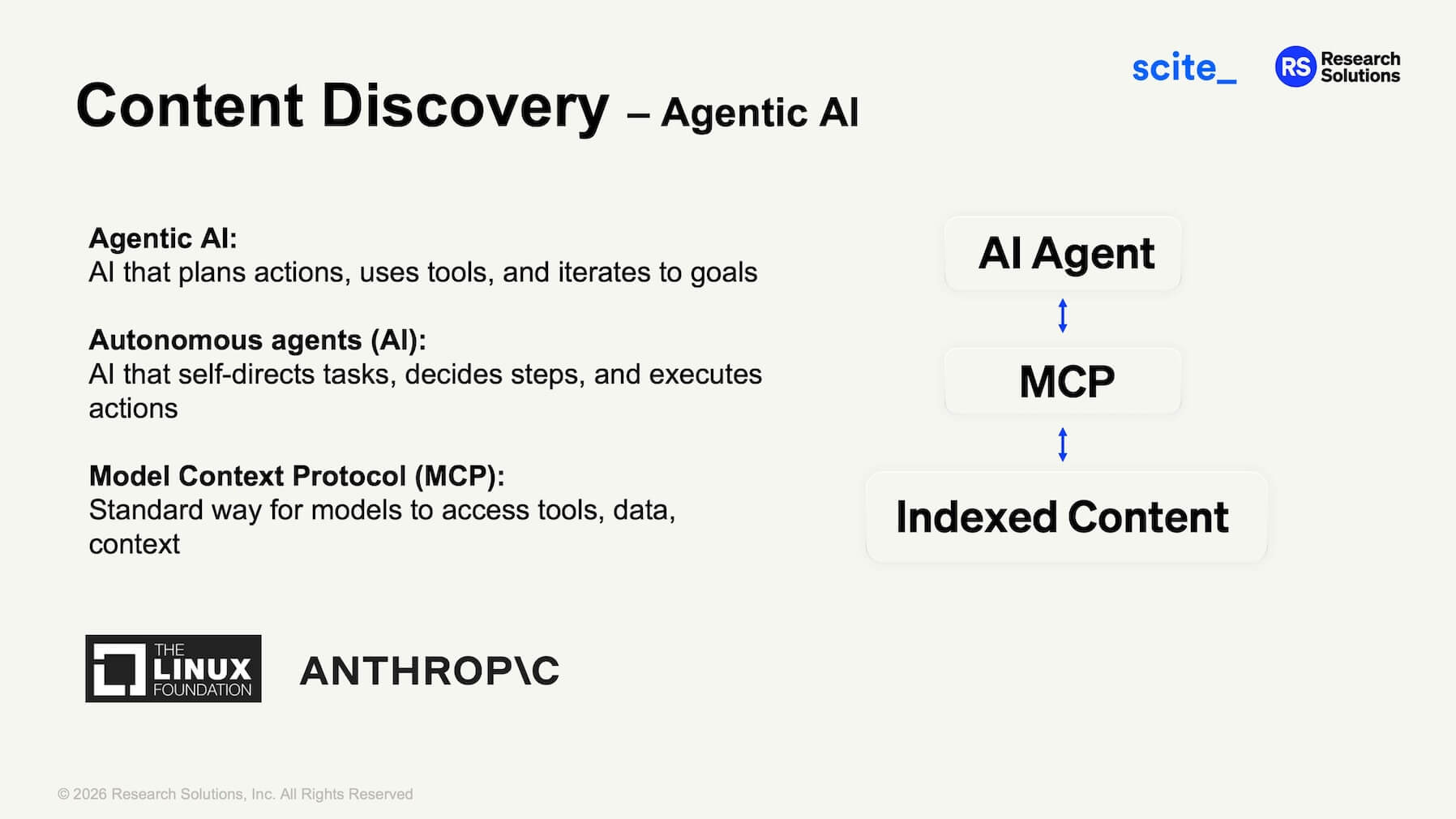

The newest piece of this puzzle is something called Model Context Protocol (MCP). Anthropic introduced MCP in late 2024, and it quickly gained traction across the tech industry and broader AI community as a standard way for AI agents to connect with external tools and data sources.

The shift happening now is from simple question-and-answer LLMs to more autonomous agentic AI systems that can plan workflows and execute tasks. Imagine asking an AI to research a topic. Instead of just searching the web, it could query scholarly databases, access full-text articles (with proper permissions), cross-reference findings, and synthesize results while showing you the citation context.

The real power comes from combining multiple information sources in a single query. Maybe you need insights from published research alongside internal company documents. Or you want to cross-reference academic findings with patent data and clinical trial results. With MCP-enabled agents, you can assign different connectors to different information types, letting the AI interrogate all of them simultaneously and synthesize a comprehensive answer.

The workflow looks something like this: you ask your question, the AI agent calls the relevant connectors (scholarly literature, internal documents, specialized databases), retrieves and analyzes the information, then delivers a synthesized answer with proper citations and access links. Where institutional subscriptions exist, articles can be accessed automatically. Where they don't, document delivery can fill the gaps. The agent can even use deep research models on full-text articles and supplementary materials to extract more nuanced insights.

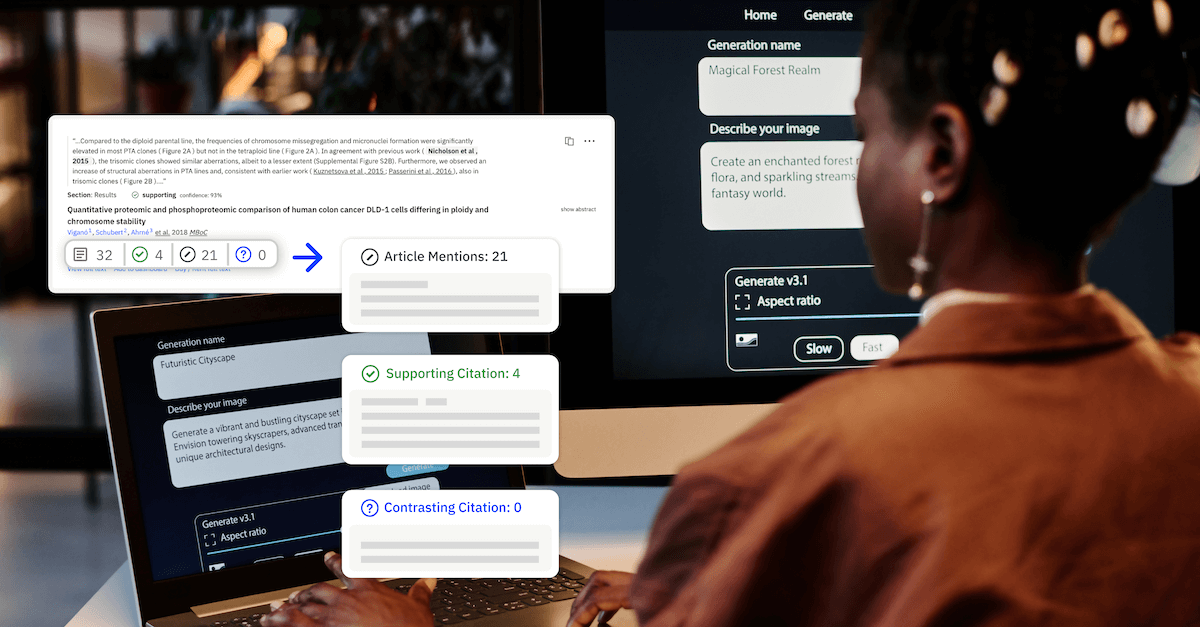

Scite has built an MCP connector that brings their citation intelligence into tools like Claude and ChatGPT. When you ask a research question with the Scite connector enabled, the LLM doesn't just retrieve random papers. It searches through indexed scholarly content, including full-text access where partnerships allow, and returns answers with real citation statements showing supporting or contrasting evidence.

In a recent demonstration, Claude answered a technical question about trisomy in cancer cells. The model called the Scite search function, investigated multiple papers, retrieved full text where available, and synthesized an answer with proper citations. You could see the thinking process: which papers it searched, why it chose certain sources, what the actual citation statements said.

Why This Matters Beyond Individual Researchers

Universities and organizations are racing to deploy AI systems. But these systems need reliable connections to scholarly content. Not just open access papers, but the full landscape of research including subscription journals. And they need more than access. They need citation intelligence to weight sources appropriately.

There's also a measurement problem. When students and researchers use generic AI tools for discovery, that usage is invisible. Library subscriptions get justified by usage statistics, but if people are finding and reading about research through ChatGPT instead of going directly to articles, those numbers tell an incomplete story.

Scite's MCP infrastructure tracks these AI reads. Which journals get queried? Which articles do LLMs pull from? This matters for publishers trying to understand their content's value and for institutions making subscription decisions. The research isn't only being read by humans anymore.

Citation Needed (Again)

The difference between helpful AI and unreliable AI often comes down to citations. Real ones, properly contextualized, showing you what exists and how it's been received by other scholars. It's the same mechanism that made Wikipedia work and Google dominant.

Remember that 88%. The overwhelming majority who said they don't trust LLM answers without citations aren't being cautious for the sake of it. They're recognizing something fundamental about how knowledge works in professional contexts. You can't build reliable conclusions on invisible foundations. You can't verify what you can't trace. And you can't distinguish good research from bad when everything sounds equally authoritative.

The infrastructure is being built to bridge that gap. Whether institutions, publishers, and AI providers choose to implement it will determine whether these tools become trusted research partners or sophisticated misinformation engines.