What It Looks Like When An AI Research Assistant Shows Its Work

The "no AI" policy ship has sailed. Faculty members across disciplines have spent the past two years watching students submit papers with suspiciously smooth prose and nonexistent citations, leading to a game of academic whack-a-mole that benefits no one. Meanwhile, researchers in the same institutions use AI tools daily to accelerate literature reviews and identify research gaps.

This contradiction puts everyone in an awkward position. Students receive mixed messages about when AI assistance crosses from helpful to harmful. Librarians field questions about AI detection tools while wondering if they should be teaching evaluation skills instead. And professors revise syllabi for the third time this semester, trying to draw clear lines in perpetually shifting sand.

This isn't about whether AI belongs in academic research. It's already there. What matters is how we use these tools responsibly, and that starts with understanding where they fail spectacularly.

The Citation Fabrication Problem

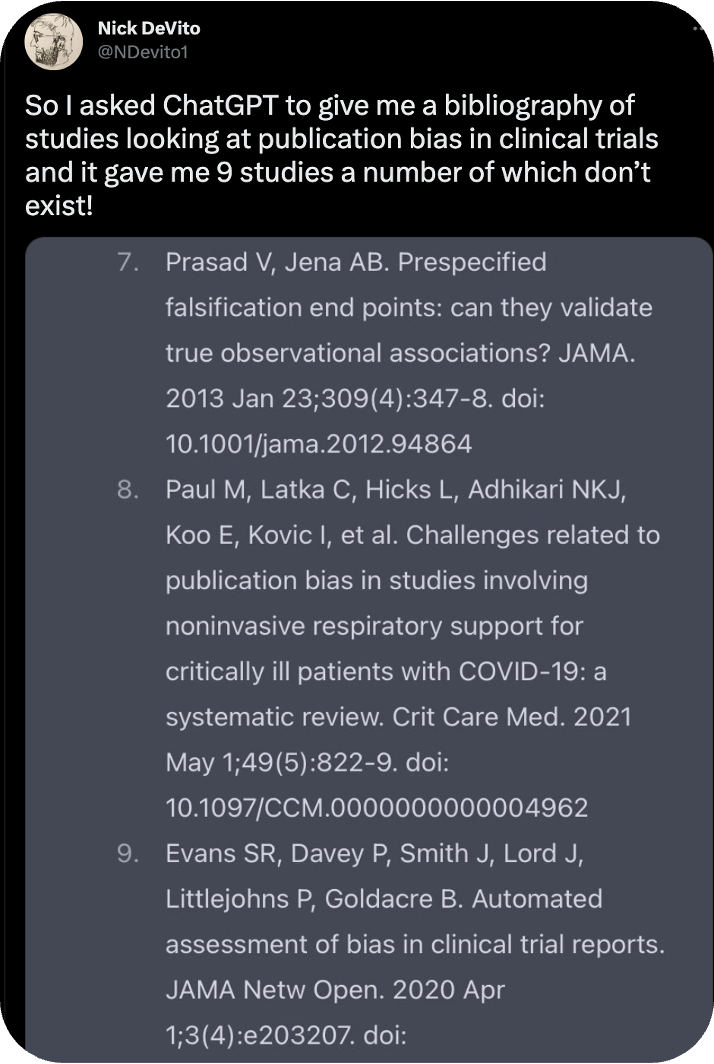

Here's where most AI tools stumble badly: they make things up. Not occasionally or rarely. Consistently enough that you can't trust them without verification. Large language models predict text based on patterns, not facts. When you ask ChatGPT for sources on publication bias in clinical trials, it might cite "Prasad V, Jena AB. Prespecified falsification end points: can they validate true observational associations? JAMA. 2013 Jan 23;309(4):347-8."

Sounds legitimate. Except that paper doesn't exist.

You've probably heard this called a “hallucination.” The term works, but it makes the process sound more mysterious than it is. The model learned that citations follow certain patterns (author names, journal abbreviations, volume numbers) and generates text that matches those patterns. It has no concept of whether Prasad and Jena wrote that paper, or whether JAMA published it in 2013.

For researchers, this creates an obvious problem. You can't build an argument on fabricated sources. Even one fake citation undermines your credibility and potentially derails your entire project. Yet the alternative (avoiding AI tools entirely) means ignoring genuinely useful capabilities for exploring unfamiliar topics, identifying key terminology, and connecting concepts across disciplines.

A Different Architecture For Research AI

Some tools have started approaching this problem differently by separating what AI does well from what it does poorly.

Take Scite Assistant as an example.

The large language model reads your question and determines what information you need. But instead of pulling answers from its training data, it searches Scite's database of scholarly literature, including both open access and paywalled sources from publisher partnerships.

The distinction matters more than it might seem. When you ask about social media's effects on adolescent mental health, the system composes search queries, runs them against real papers, and builds responses from actual published research. Every claim links back to specific passages in identified sources. You can hover over any citation to see the exact text that supports that point.

This transparency shifts the tool from mysterious black box to auditable research assistant. You're not trusting the AI to be right; you're using it to efficiently locate relevant sources you can then evaluate yourself. The research process stays in your hands. The AI just accelerates the parts that used to mean hours of database searches and Boolean operators.

It's worth noting that this approach significantly reduces hallucinations, though it doesn't eliminate them entirely. The system still occasionally suggests citations that don't fully support the claim being made. But because you can see the actual source text, catching these mismatches becomes straightforward.

Taking Control Of Your Results

You have significant control over how Scite Assistant behaves. Click the gear icon in the Assistant window to access the settings panel.

From here, you can specify year ranges to focus on recent literature, filter by publication types (clinical trials, literature reviews, etc.), or limit searches to specific journals. If you need results only from papers you've already identified, you can paste in document identifiers or pull from a dashboard collection you've built in Scite.

The settings also let you choose your citation style (APA, MLA, IEEE, and others) and work with non-English language sources. You can tell Assistant to respond in a given language or search for papers published in specific languages.

These controls matter because they let you narrow broad topics and ensure the results match your specific requirements. A psychology student writing in APA style with a five-year recency requirement can set those parameters once rather than filtering manually through everything Assistant finds. Once you've got your results, though, the next challenge is making sense of them all.

Making Sense Of Twenty Open Browser Tabs

One capability addresses a specific pain point that anyone who's written a literature review will recognize: organizing dozens of sources by the specific questions your analysis requires. Some AI research tools, including Scite, offer table modes that create structured lists of sources with summaries of what each paper says about your question, rather than generating paragraphs of prose.

This becomes especially useful for assignments requiring balanced perspectives. You can ask the system to add columns that capture specific dimensions (like whether each source identifies benefits of social media use from the example before). The AI reads through your results and populates that column based on what the papers actually say. You end up with an annotated bibliography sorted by the criteria your analysis needs.

For students, this beats the traditional approach of opening twenty browser tabs and trying to remember which paper made which argument. For researchers, it speeds up the early literature review phase when you're still mapping the terrain. Export the table as a CSV and pull it into whatever reference manager you prefer.

The key is that you're still reading the papers. The AI isn't writing your analysis or making interpretive judgments. It's organizing source material so you can see patterns and gaps more clearly.

Academic Integrity Through Documentation, Not Detection

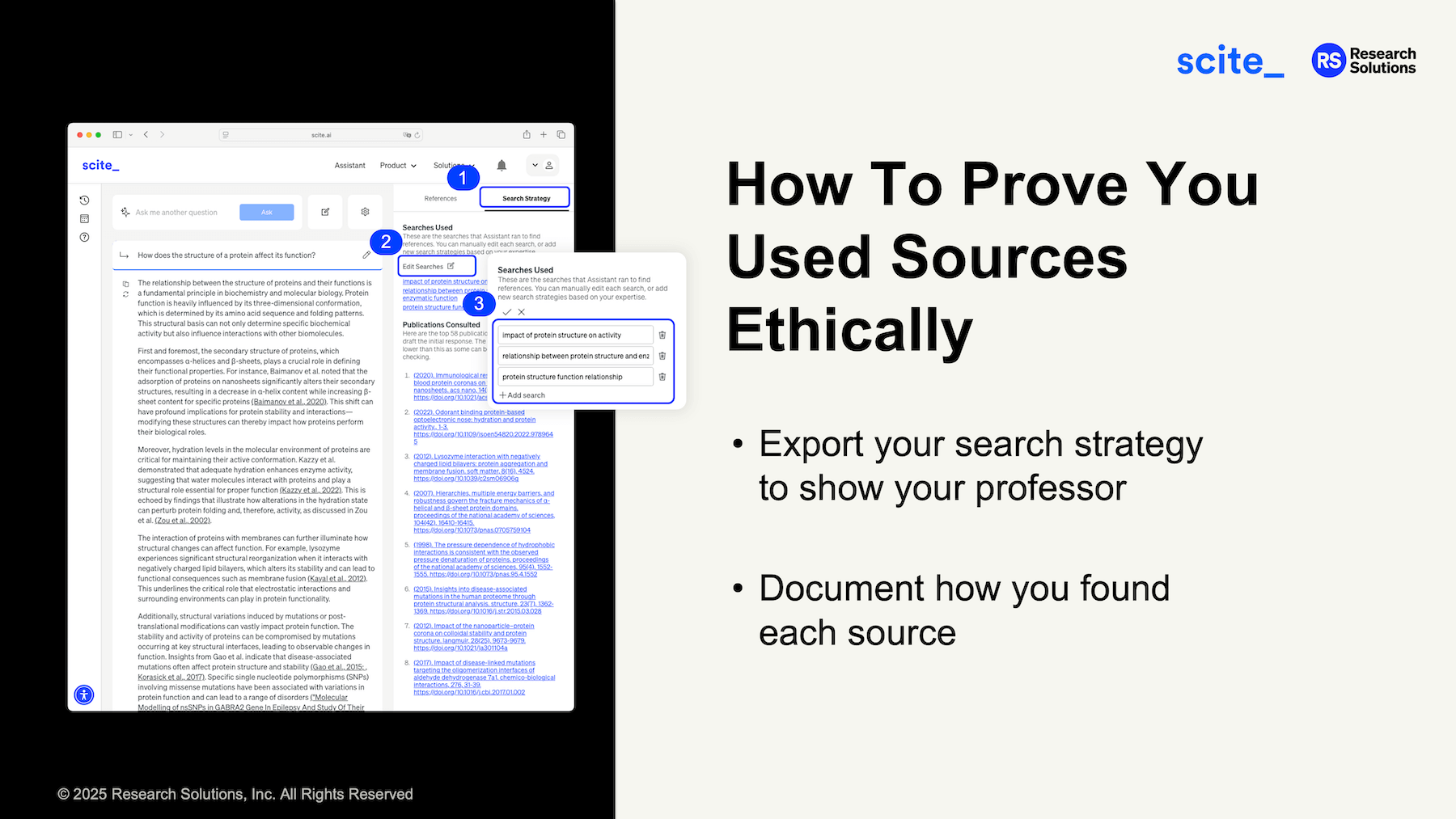

Here's what often gets lost in AI policy discussions: disclosure matters more than prohibition. When students (or researchers) use AI tools transparently and document their process, the conversation shifts from policing to pedagogy.

Better AI research tools build this transparency in. Features that show exactly which searches were run and allow users to export that information serve everyone better than AI detection tools, which frequently flag false positives and create adversarial relationships between students and instructors.

A professor requiring students to document their research process can ask for this documentation alongside the bibliography. The student demonstrates how they found sources rather than claiming they emerged fully formed from their own brilliance.

This kind of transparency also reflects how research actually works. Professional researchers document their methodology so others can evaluate and replicate their process. Teaching students to do the same prepares them for actual research practice rather than just satisfying assignment requirements.

The assumption shifts from "did you cheat?" to "how did you approach this problem?" That's a conversation worth having.

What This Means For Information Professionals

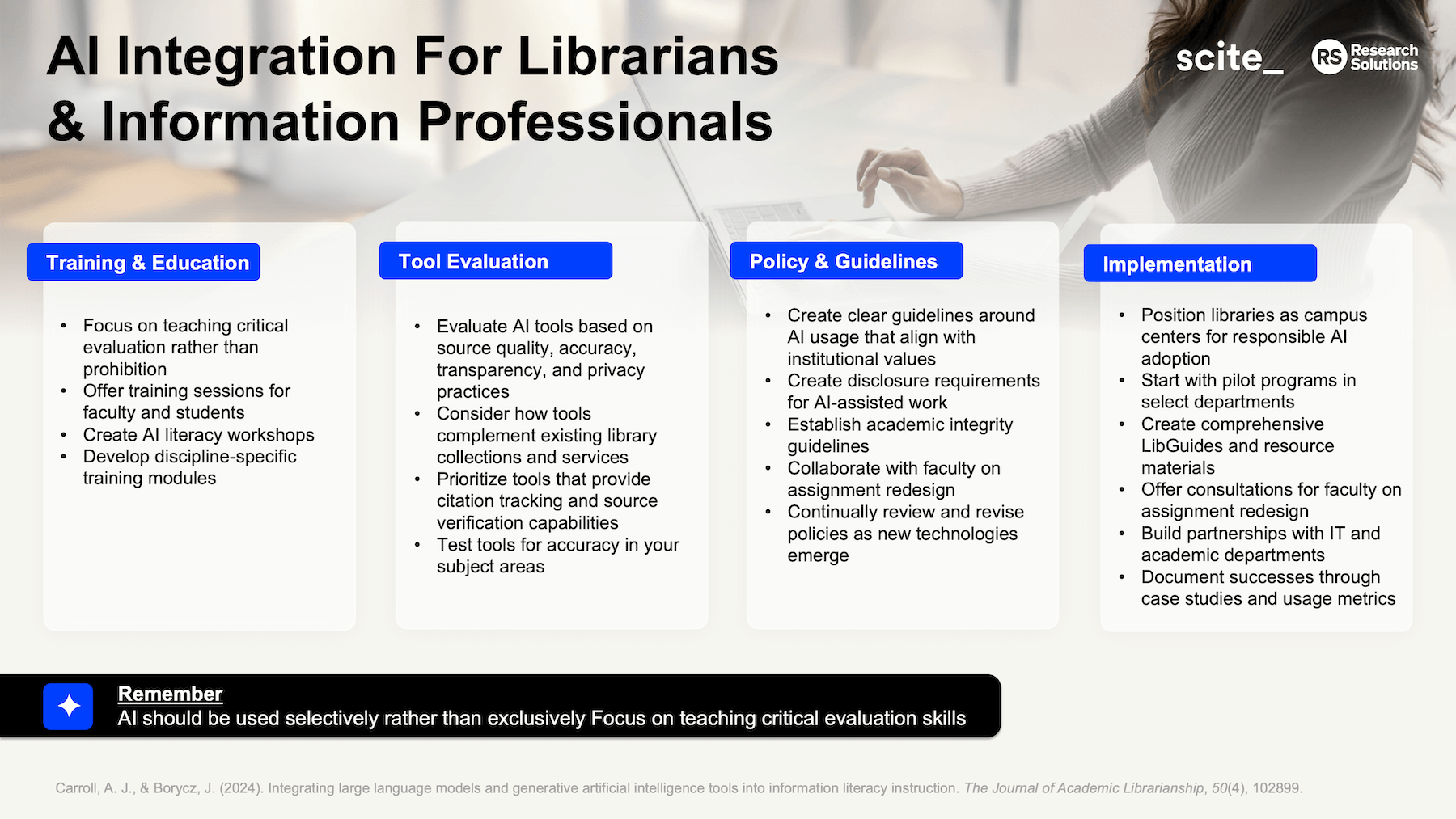

Librarians find themselves in a peculiar position with AI tools. They're expected to know about them, evaluate them, teach others how to use them, and somehow stay current as new tools launch weekly. All while maintaining traditional library services and managing shrinking budgets.

A practical approach: focus on evaluation criteria rather than specific tools. Teach people to ask whether a tool provides source verification, transparency about how it works, and compatibility with existing research workflows. These principles apply whether someone's evaluating Scite, another AI research tool, or whatever launches next month.

This also means positioning libraries as centers for responsible AI adoption rather than gatekeepers trying to prevent it. Offer workshops on AI literacy, create LibGuides that explain how different tools work, and partner with faculty on assignment design that incorporates AI thoughtfully. The goal isn't to be the AI expert who knows every tool. It's to apply existing information literacy skills to this new category of resources.

Some practical recommendations for librarians navigating this territory:

Start with pilot programs in select departments where faculty are already AI-curious. Document what works through case studies and usage metrics. This builds institutional buy-in better than campus-wide mandates.

Create comprehensive resource materials that students and faculty can reference when they need help. Include not just how to use specific tools but how to evaluate whether a tool is appropriate for a given task.

Offer consultations for faculty on assignment redesign. Many professors recognize their traditional assignments have become vulnerable to AI misuse but don't know how to restructure them effectively.

Partner with IT and academic departments rather than going it alone. The expertise needed to support AI literacy spans multiple domains, and libraries shouldn't shoulder this burden solo.

The Real Conversation We Should Be Having

AI tools won't replace thinking about research questions, evaluating arguments, or synthesizing ideas. What they can do is accelerate the mechanical parts of research (finding sources, identifying terminology, spotting connections) so you spend more time on the parts that actually matter.

The challenge is helping students understand that distinction. When you use AI to jumpstart your thinking on an unfamiliar topic, that's different from asking it to write your paper. When you use it to locate relevant sources quickly, that's different from trusting it to summarize those sources accurately.

These nuances matter. They're also harder to communicate than a blanket "no AI" rule. But they better reflect how research works and prepare students for environments where AI tools are standard equipment.

The institutions and instructors figuring this out now will have a significant advantage over those still fighting the last war about whether AI belongs in academic work. That question has been answered. The new question is how we teach people to use these tools well, with appropriate skepticism, proper verification, and genuine understanding of what they're doing.

The students graduating today will enter workplaces where AI research assistants are as common as word processors. We can either prepare them to use these tools thoughtfully, or we can send them out knowing only that their professors told them AI was cheating. One of those approaches serves them better than the other.

What makes the difference is teaching research as a process of inquiry rather than a box-checking exercise. AI tools should support that inquiry by removing friction from the mechanical parts: the database searching, the citation formatting, the initial literature mapping. But the core work of research (asking good questions, evaluating sources critically, building coherent arguments) remains human work.

The sooner we accept that AI tools are part of the research landscape and focus our energy on teaching people to use these tools well, the sooner we can move past unproductive policy debates and get back to what matters: helping people learn to think clearly, write persuasively, and engage meaningfully with scholarly literature.